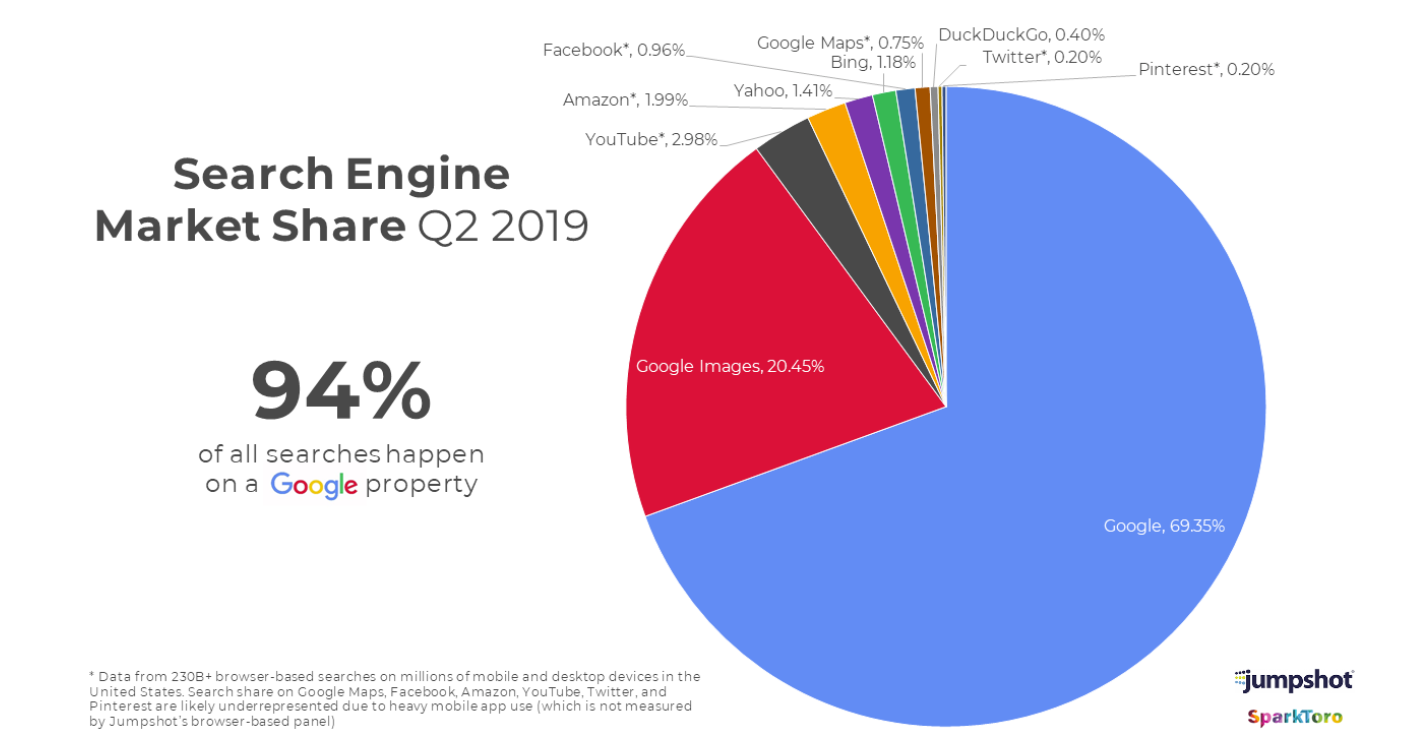

Google is the most widely used search engine in the world. As the accompanying image shows, around 94% of searches worldwide are made on Google. As an everyday user, you may wonder how Google Search manages to handle millions of search queries every day. This blog post looks at how Google Search works in more detail.

Image Source – https://www.towermarketing.net/blog/google-best-search-engine/

How Does Google Search Work?

Google Search goes through several phases before it fetches the results for a user's query and displays them on the user's computer. Two main functions are at work whenever a user searches for anything:

- Crawling & Indexing

- Fetching the Search Results

Crawling and indexing is the first phase, in which Googlebot crawls websites and stores them on the indexing server. The second phase picks the best pages from the indexing server based on the user's search query and displays them on the Search Engine Results Page (SERP). Let's look at each phase in more detail.

Crawling & Indexing

If you create a new website, Google needs to know about it before it can show your site to users in search results. To discover websites, Google uses a bot called Googlebot, also known as the Google crawler or Google spider.

The crawler works through the publicly available websites on the internet and indexes them; that is, it crawls web pages and stores them on the indexing server. For example, when the crawler visits a web page, it crawls that page and then follows the links on it to other pages. By following links, the crawler discovers other pages on the same website or on other websites and indexes them as well.

Image Source – https://www.google.com/search/howsearchworks/

The process continues in the same way: the crawler follows links from one page to the next until it has crawled the entire website and, eventually, the millions of web pages on the internet.
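The link-following idea can be illustrated with a minimal sketch. This is not how Google's crawler is implemented; it is a simple breadth-first crawl over a small in-memory set of pages. The `crawl` function, the `fetch` callable, and the example URLs are all made up for illustration.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch):
    """Breadth-first crawl: visit a page, queue its unseen links, repeat.

    `fetch` is a hypothetical callable returning the HTML for a URL,
    or None when the page cannot be retrieved.
    """
    seen = {start_url}
    queue = deque([start_url])
    order = []
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        order.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return order


# A tiny in-memory "web" standing in for real HTTP requests (made-up URLs).
PAGES = {
    "https://example.com/": '<a href="/about">About</a> <a href="/blog">Blog</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/blog": '<a href="/blog/post-1">Post</a>',
    "https://example.com/blog/post-1": '<a href="https://example.org/">Other site</a>',
    "https://example.org/": "<p>No links here</p>",
}

crawled = crawl("https://example.com/", PAGES.get)
print(crawled)
```

Starting from the home page, the sketch reaches every page on the example site and then the external site, purely by following links. The `seen` set keeps it from visiting the same page twice.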

Once Googlebot crawls your website, it reads the content of your web pages and stores it on the indexing server. The index holds hundreds of billions of crawled web pages. Pages are stored in the index in an order determined by several ranking factors.
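To make the idea of an index more concrete, here is a minimal sketch of an inverted index, a common data structure that maps each word to the pages containing it. Google's actual index is far more sophisticated; the function names and sample pages below are assumptions made for illustration only.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map each word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


def lookup(index, query):
    """Return the URLs that contain every word in the query."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


# Made-up pages standing in for crawled content.
pages = {
    "https://example.com/seo": "SEO tips for new websites",
    "https://example.com/crawl": "How a crawler finds new pages",
    "https://example.com/cake": "Chocolate cake recipe",
}
index = build_index(pages)
print(sorted(lookup(index, "new websites")))
```

Because the index is built ahead of time, answering a query only requires looking up a few words rather than rereading every page.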

Crawling and indexing is the primary phase of Google Search. If you have a new website, it is recommended to add plenty of internal links so that Googlebot can find and crawl your web pages. Internal links help the crawler navigate to your other pages and crawl the site effectively.

Fetching the Search Results

In the first phase, Googlebot crawls web pages and indexes them, and the pages are stored on the indexing server according to the ranking factors. In the second phase, when a user searches on Google, the search engine fetches the best results for that query from the indexing server and displays them in the search results.

On the index server, web pages are stored according to the ranking factors. Pages with good authority and relevant information are given higher priority and are displayed at the top of the search results.
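As a rough illustration of ranking by relevance and authority, the sketch below scores each page by how often the query words appear in it, weighted by an authority value. The scoring formula, the `authority` numbers, and the sample pages are invented for this example; Google's real ranking factors are far more numerous and are not public in this form.

```python
def score(page, query_words):
    """Toy score: number of query-word matches times the page's authority."""
    words = page["text"].lower().split()
    relevance = sum(words.count(w) for w in query_words)
    return relevance * page["authority"]


def search(pages, query, limit=10):
    """Return URLs of matching pages, highest score first."""
    query_words = query.lower().split()
    scored = [(score(page, query_words), page["url"]) for page in pages]
    matches = [item for item in scored if item[0] > 0]
    ranked = sorted(matches, key=lambda item: item[0], reverse=True)
    return [url for _, url in ranked[:limit]]


# Made-up pages; "authority" is a hypothetical value between 0 and 1.
pages = [
    {"url": "https://example.com/a", "text": "google search tips", "authority": 0.9},
    {"url": "https://example.com/b", "text": "search search search tricks", "authority": 0.2},
    {"url": "https://example.com/c", "text": "gardening for beginners", "authority": 0.8},
]
results = search(pages, "google search")
print(results)
```

Page `b` repeats the word "search" more often, but page `a` ranks first because its higher authority outweighs the extra matches. Page `c` is left out because it does not match the query at all.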

As a business owner or blogger, you should optimize your web pages to rank better in search results. You can also hire an SEO consultant in London, such as Fernando Raymond, the founder of ClickDo, to optimize your website for better rankings.

How to Submit Your Website to Google

As a business owner, once you create a new website, you should make sure that the site is indexed and available on Google. If the site is not indexed yet, you can create an XML sitemap for it and submit the site to Google using Google Search Console.

If you are new to this, you can refer to the guide on website creation written by Dinesh Kumar VM, which is available on SeekaHost. It is a step-by-step guide to creating a new business website, integrating Search Console, creating the XML sitemap, and submitting it to Google through Search Console.

Google Search Console is a free service provided by Google that helps website owners submit their sites to Google. With Search Console, you can also find and fix any errors on your website.

Submitting the XML sitemap to Google Search Console helps Googlebot crawl all the posts and pages on your website. The XML sitemap contains the URLs of the posts, pages, media files, and attachments on your website, which makes it easier for the crawler to index all of the links.
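For readers curious about what such a file looks like, here is a minimal sketch that builds a sitemap with Python's standard library. It follows the basic `urlset`/`url`/`loc` structure of the sitemaps.org protocol; the `build_sitemap` function and the example URLs are assumptions for illustration. In practice, most site owners generate the sitemap with a CMS plugin or a sitemap tool.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap XML string with one <url><loc> entry per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Made-up URLs for a hypothetical site.
sitemap_xml = build_sitemap([
    "https://example.com/",
    "https://example.com/blog/first-post",
])
print(sitemap_xml)
```

The resulting text would typically be saved as `sitemap.xml` at the root of the site, and its URL is then entered in the Sitemaps section of Search Console.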